This is the second blog post of two about the Power Platform Saturday Sweden event which was held on the 5th of October. In the first one you can read about it from an attendee’s point of view. In this blog post I will let you in on the session that me and my colleague Carl Aspernäs held. Actually, I put in some new tips in here as well, which we did not mention during our session. So even if you did attend our session, you might learn something new from this blog post.

Building up the session towards a demo

Our session was built up of five different parts:

- Implementations now and then

- Data Integration – Functional Point of View

- The Evolution from Data Integrator for Admins to Dataflows

- Deep-dive into Dataflows

- Demo time

Implementations now and then + Functional Point of View

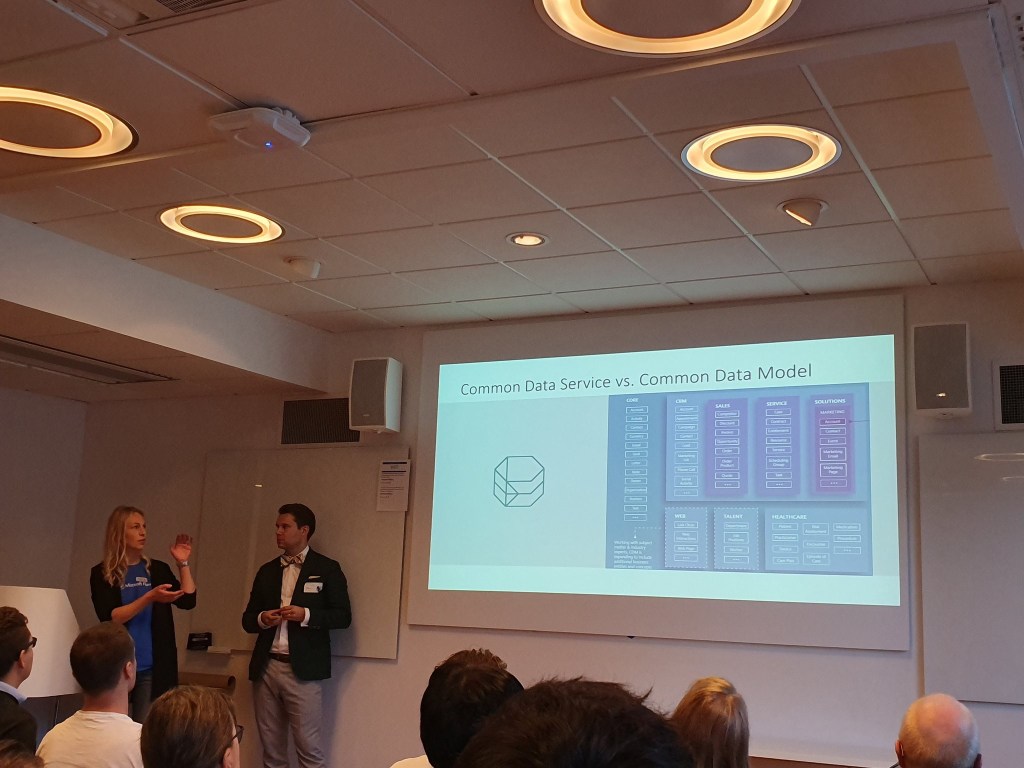

First some general talk about how projects and data integration have evolved from earlier to now. Then Dataflows and Data Integration from a functional perspective – where we talked about the ”What”, ”Why” and ”When”, mostly related to Dataflows actually. But before the “What”, “Why” and “When” we went through some basic concepts, like explaining the CDS and CDM.

We pointed out that you can take a look at the names, the Common Data Service is a service which lets you store data in the cloud. The Common Data Model is the entity model which is the structure of entities related to each other and provided to us by Microsoft. The core entities are the built in entities and then we have some entities from the so called first-party apps like Sales etc. This model can be extended and that is when we create custom entities.

During a chat between MVP Jonas Rapp and MVP Mark Smith, Jonas came up with a lovely explanation. I did not mention this during the session, but I will add it here since I like it a lot:

The CDM is like the map and the CDS is the landscape we walk on

On to the “What”, “Why” and “When”.

What – A service which lets you take data from several different sources, transform it and transfer the data into the Common Data Service or Azure Data Lake (Gen2), no-code / low-code. You can also keep the data refreshed on a schedule.

Why – What is the purpose of Dataflows?

The vision from Microsoft

Democratize data (integration) for business users, so that it is seamless to extract, transform, and load data into Common Data Service and Azure Data Lake Storage from any data source, and to provide structure and meaning to that data through the Common Data Model.

Under the “Why” we also pointed out that Microsoft simply wants to make our lives easier by providing us with a service which: 1. Reduces the time, cost, and expertise required to prepare and aggregate data for analytics. 2. Helps organizations unify data from different sources. Simply they are giving us a no-code tool/low-code tool for shaping data into well-structured, actionable information which can be used in our Apps, AI workloads or analytics.

When – When can it be useful?

Here we pointed out that we just said that Dataflows can be used in order to get data into the CDS and you can then use the data in your apps. But what does that really mean? Well, nowadays the “CRM-parts” in Dynamics 365 actually are apps built on the Power Platform – Model-Driven Apps – and the data is stored in the CDS. That basically means that you can use Dataflows in order to transfer information from other sources into your CRM-system or to your other solutions built on the CDS (former “xRM solutions” for all of you former xRM/CRM enthusiasts).

Do have in mind that we also have Canvas Apps. All of you who read my blog post about how to make it from Model-Driven Apps to Canvas Apps knows that one part of PowerApps are Model-Driven Apps and those are basically the same as what we former Dynamics CRM enthusiast used to work with. The other part is Canvas Apps. Canvas Apps can also have the CDS as data source, which means that you can use Dataflows to get data into your Canvas Apps. (Here you might want to consider choosing another data source than the CDS though rather than having a Dataflow transferring the data to the CDS.

Below follows three different scenarios for the “When”.

Enrich existing data – A possible scenario is to enrich existing data that you have in your apps. To set up Dataflows which run on a schedule and transfers data from a data source into the CDS to enrich e.g. the Account entity, another entity of your choice or simply fill one or several custom entities with data from another data souce and let those entities extend your entity model and let those entities relate to e.g. the Account entity.

Of course you need to think about the overall solution and perhaps it would be better with something else than Dataflows. Even if we are talking about a Model-Driven App, perhaps in your case it is a better choice only to display the data and not have it stored within the CDS, then you could look into Virtual Entities. Another choice is to display the data in an embedded Canvas App.

In order to find the best solution you should e.g. think about what you need to do with the data. Do you need to have some actions based on the data, like send an e-mail or create activities when xxx occurs? Do you need to have the data available so that you can perform searches and set filters based on it? Or do you just want to display some data related to certain records? You also need to have in mind that with Dataflows it is just one direction, data INTO the CDS.

Big amount of data – Another scenario would be to gather information from different sources and work with that data as a first step in order to prepare for data analysis.

Train AI models – A third scenario, very niched, is to train an AI Model and use Dataflows to enable this.

After the “What”, “Why” and “When” we took a few steps back and looked at the feature background/history and with that we also talked about how Dataflows is related to Data Integration in Power Platform.

The evolution from Data Integration to Dataflows

Where did Dataflows come from and how is it related to Data Integration in Power Platform? These questions were answered during this part of the session.

The 2019 Release Wave 2 gave us something called Dataflows, but the concept has been here for some time now. For about 2 years ago Microsoft introduced something called the Data Integrator for Admins into the Power Platform. It was presented as an integration service you could use in order to integrate data from different sources into the CDS.

The Data Integrator for Admins still exists and you can find it in both Admin centers: https://admin.powerapps.com and https://admin.powerplatform.com.

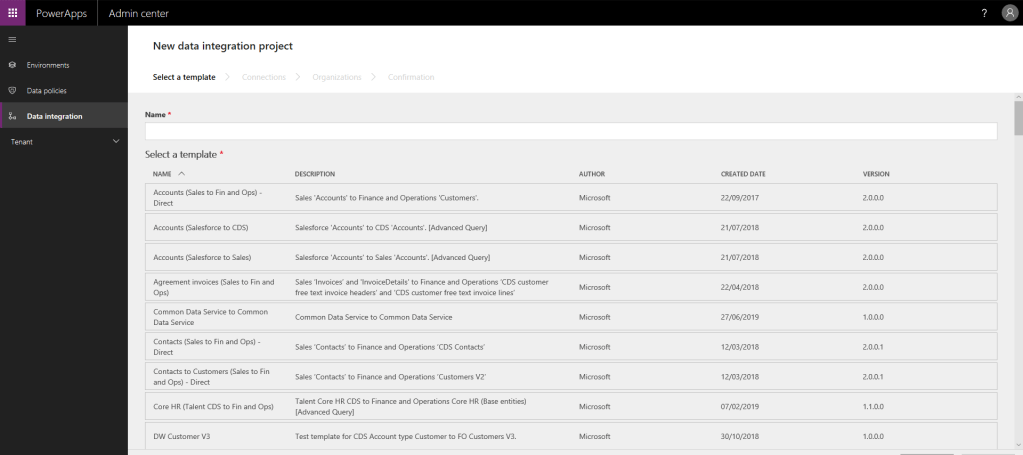

There are templates that can be used, you set up connection sets (credentials for source and destination) and then you create integration projects.

The template are pre-defined mappings for integrating different parts of the Dynamics 365 family, such as Sales, Finance & Operations, Fields Service, Project Service and Talent. But there are also other templates, e.g. for integrating Salesforce with the CDS.

One thing we did not mention during our session, but which I have found, is that if you use https://admin.powerapps.com you see the templates. For some reason I do not do that in the other admin center- i.e. in the new one.

For about a year ago I started to look at Data Integration in Power Platform. I have written about it before, in a series of three blog post, starting here. When I started to look at it, at first it was a bit confusing, since I found Data Integration in both Maker Portal and in the Admin center. There was not much to read about this subject either.

Basically it turned out to be two different user interfaces to the same service. In the admin center you had the connection sets, templates and integration projects. In maker portal you only had integration projects and you started from a data source. Back then both were called Data Integration and you created Data Integration projects which was shown in the Admin center no matter of where you started (Maker Portal och Admin center). Now we have a somewhat more clear distinction between the two since in Maker Portal it is now called Dataflows.

From my experience Data Integrator for Admins is better suited for when integrating different parts form the Dynamics 365 family, e.g. Dynamics 365 Sales with Dynamics 365 Finance and Operations. If I were to plan such an integration I would look into the Data Integrator for Admins.

Dataflows however, I think is better suited for transferring from other different sources into the CDS. I have found them easy to work with both when using an SQL server (on-premises) as well as Web API as data source. Once again, do have in mind this is only one-direction, i.e. data into the CDS.

About integrating the different parts of the Dynamics family, one thing that might be good to know is that in the Release Wave 1 2019 something called Dual Write was introduced. In the release plan for 2019 Release Wave 2 you can read:

“Whereas Data Integrator is a highly customizable batch-based integration service, Dual Write is a near-real-time integration service that allows Finance and Operations customers to natively get their data in Common Data Service.”

Remember, Dynamics 365 Sales also has its data in the CDS, which means that the end user experience is supposed to be like this: when updating an account in one system – the account is immediately updated in the other. I would recommend to look more into this if you want to integrate Dynamics 365 Sales with Dynamics 365 Finance and Operations.

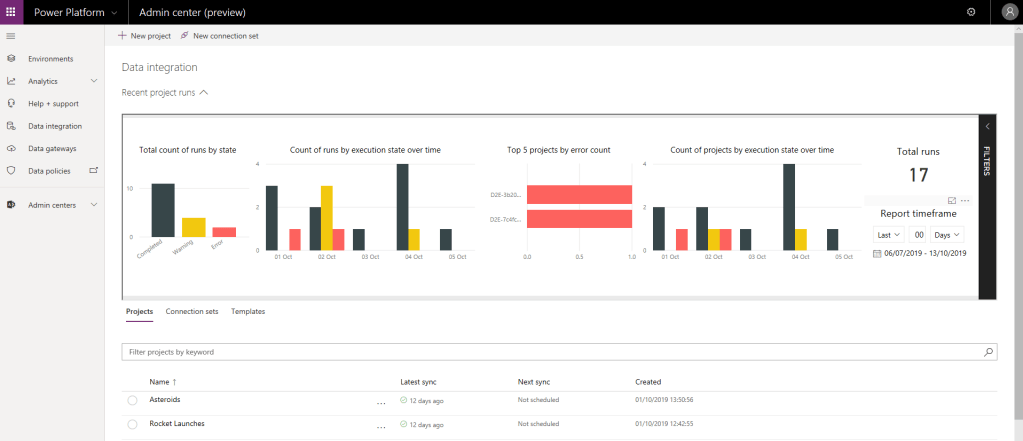

Back then, when I started to look at Data Integration in Power Platform, as well as now, you have all the run (and scheduled) integrations jobs and Dataflows in the admin portal under Data, Data Integration.

It is easy to miss this fact, that when working with Dataflows and a Dataflow does not run correctly you might find detailed error messages in the Admin portal. Do not forget to take a look in there if you want to find out why your Dataflow was not run correctly. Just click on your project, Execution history and open the one with an error.

However, if it is the first time you run a certain Dataflows and it fails, nothing will be shown in the admin portal. Then you simply need to narrow down the scope of the Dataflow, try to insert/update just one or a few fields and you can add several later and then hopefully you will get something in the error log for what was wrong from the beginning.

What could go wrong then? Here are a few things that might cause the Dataflow not to run correctly.

- The data types of the source and destination for a certain field do not match

- The data from the source for a certain field contains more characters then what is allowed for the destination field.

- A certain lookup value that you are trying to set cannot be found

- Your source cannot be reached at the moment

When having the Dataflows scheduled, you can type in an e-mail address for each scheduled Dataflow and there will be an e-mail sent to this e-mail address in case of an error.

That was all we said about the Data Integrator for Admins. Well not all of this actually, I added a few things here which I did not mention on our session. Anyway, the rest of the session was dedicated to Dataflows. Before the big finale with the demo, we made a deep-dive into Dataflows.

Deep-dive into Dataflows

Here we started with an overview of Dataflows. It is a picture that I put together, which shows that with Dataflows you can have online data sources (e.g. Azure SQL databse, Azure SQL Data Warehouse, SharePoint lists online, Excel/Text/XML/PDF files which are located on SharePoint online) or on-premises data sources (e.g. SQL Server on-premises, SharePoint lists on-premises, other on-premises databases etc.). If you have an on-premises data source you will need to install a Data Gateway on that source in order to be able to setup a Dataflow.

It also shows that your destination can be of two different types, either the Common Data Service or an Azure Data Lake (Gen2).

From those destinations you can then use the data in your Microsoft Flows, PowerApps, Power BI or for other analytical purposes.

We also went through a good-to-know slide where we pointed out that using alternate keys is the only way to go if you want to set lookups, at least from my experience. It is also a good idea to use these in order to avoid duplicates.

We also pointed out that you should pay attention to data types when you setup your Dataflow. When creating a Dataflow with the CDS as destination you can choose to either write to an existing entity in the CDS or to have the Dataflow create an entity for you. Either way you should make sure that the data fits your destination. You can modify the data types with Power Query when setting up the Dataflow. The types will not changes in the source, it is just a way to transform the data when the Dataflow is run.

I went through some current limitations and pointed out that there are some info in the release plan which contains clues that ALM might be on its way – at least for the Data Integrator for Admins. At this momest there is no way to package Dataflows in solutions and no other way either to move a Dataflow from one environment to another (e.g. Dev – Test – Production). Hopefully in the future!

Documentation

We had a slide with links. As already mentioned, there is not much to read about this subject. This is what I have gathered so far.

Data integrator

https://docs.microsoft.com/en-us/power-platform/admin/data-integrator

Dataflows

https://docs.microsoft.com/en-gb/data-integration/dataflows/dataflows-integration-overview

Articles from the PowerApps blog

Sept 19th What’s new – Dataflows and Data Integration

May 20th Announcing Dual Write

Jan 16th Data Integrator Updates

Release Plans

Take a look at the section “Data Integration & CDM” in the latest 4 release plans/notes. I’ll just provide the links to these chapters here:

Demo time

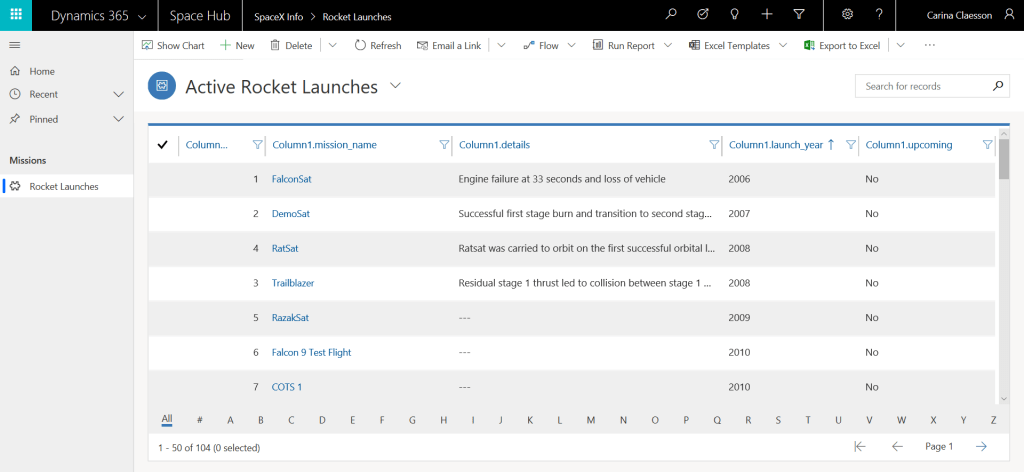

Then it was demo time. We started from an empty environment (it was a Dynamics 365 for CE 30 days trial environment). Both Nasa and SpaceX have a lot of interesting data available in open APIs. In my demo I set up a Dataflow which had one of the SpaceX open APIs as data source (Rocket Launches).

I chose to let the Dataflow create the entity “Rocket Launch” when running it for the first time – but pointed out that currently I would recommend to create the entity model before you run your Dataflow, since you do not get to choose your own prefix when letting the Dataflow create entities.

After the Dataflow had run I added some fields to the form and to one of the views (using the new experience for modifying forms and views of course) and then I created a Model-Driven App, not “Sales Hub”, but “Space Hub”, in which you could work with your Rocket Launch data.

The column names in the below picture are not pretty, but it is pretty amazing how quicky you can get data from an open API into the CDS and display it in a Model-Driven App.

If you would like to try this yourself, you can follow the steps in my previous blog post where I did something similar but with another API.

Conclusion

I am so happy that I got to be a part of this event. It was such an honour beeing there as a speaker. The PEOPLE was what made this day so special. All the energy and enthusiasm about what we all work with and all willingness to share our knowledge and competences, that is simply amazing to see. It was really great to meet up with old friends and colleagues as well as with new faces in the audience during the day and I very much enjoyed that I got the chance to hang out with the other speakers. Such brilliant people!

I was happy to see a lot of familiar faces on our session and also happy to see that all the seats were taken. I hope the audience enjoyed our demo and learned something new during our session.

Thanks for reading about our session. If you were there, did you noticed I (temporarily) modified my Microsoft Flow t-shirt?!

Here is a link to where you can find our presentation as well as the other presentations from PPSatSwe.

1 thought on “Power Platform Saturday Sweden – from a speaker’s point of view – Introduction to Dataflows and Data Integration”